You are using an unsupported browser. Please use the latest version of

Chrome, Firefox, Safari or Edge.

Stories

Inspiration. You can find it in every Mass General story. In every hand we hold, and every community partnership and global relief effort we launch. In the face of our newest medical resident and in our latest research breakthrough, decades in the making.

These stories capture how Mass General is harnessing inspiration to make a difference in the lives of patients, families, and our local and global neighbors. Read on. Get inspired. Join us.

Innovation Story

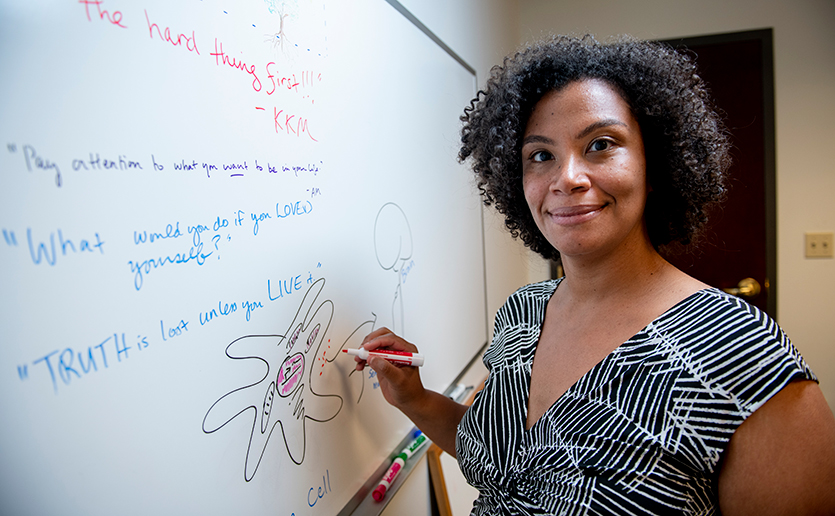

Simmie L. Foster, MD, PhD, Explains Hot and Cool Research

Innovation Story

The Wild West of “Medicinal” Cannabis

Innovation Story

Improving Outcomes for Bipolar Disorder

Profile in Medicine

Using Life’s Experiences to Determine the Meaning of Home

Profile in Medicine

Welcomed, With Open Arms

Innovation Story

Five Things Mass General is Doing to Improve Sustainability

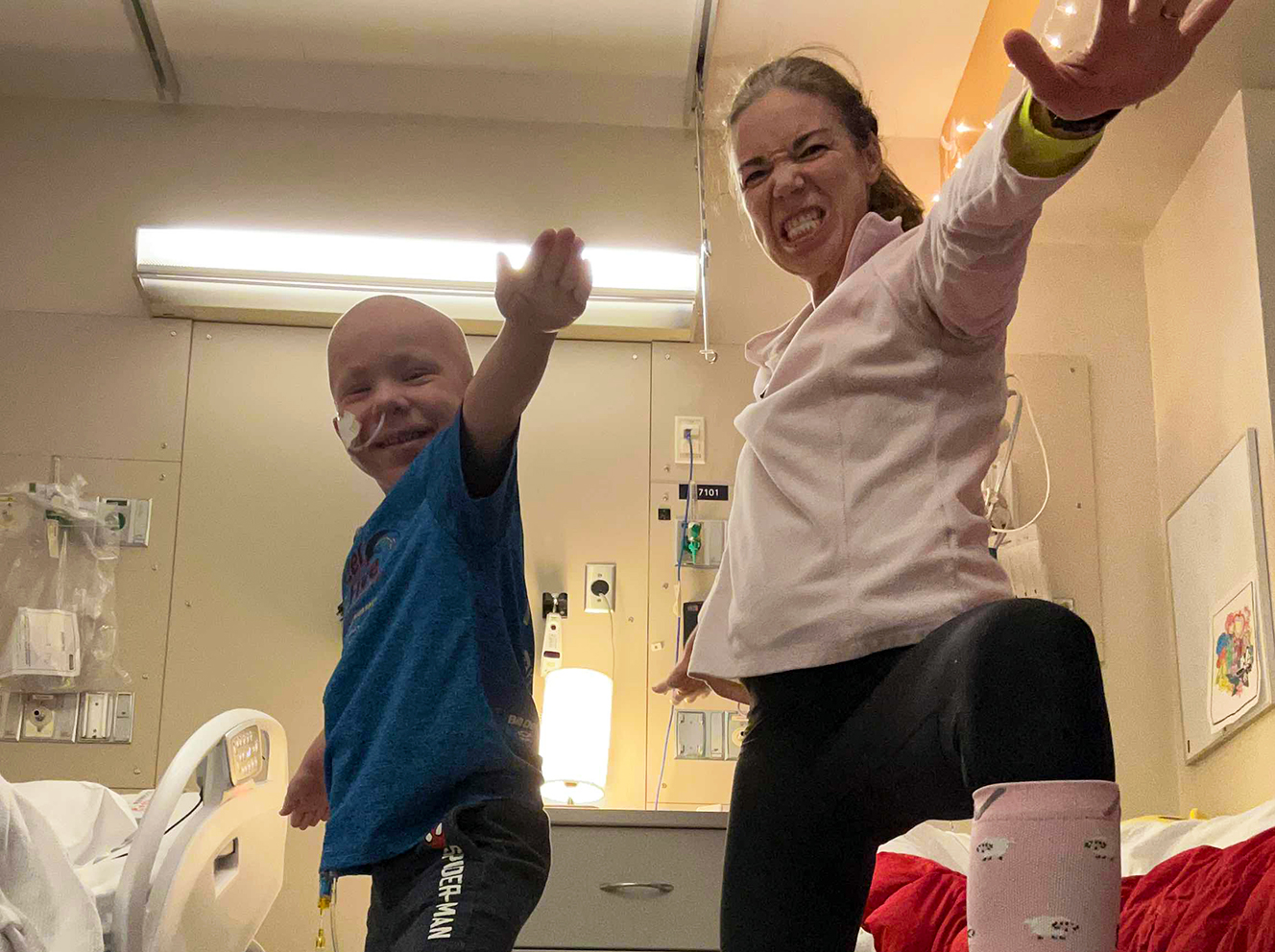

Donor Story

Brave Theo

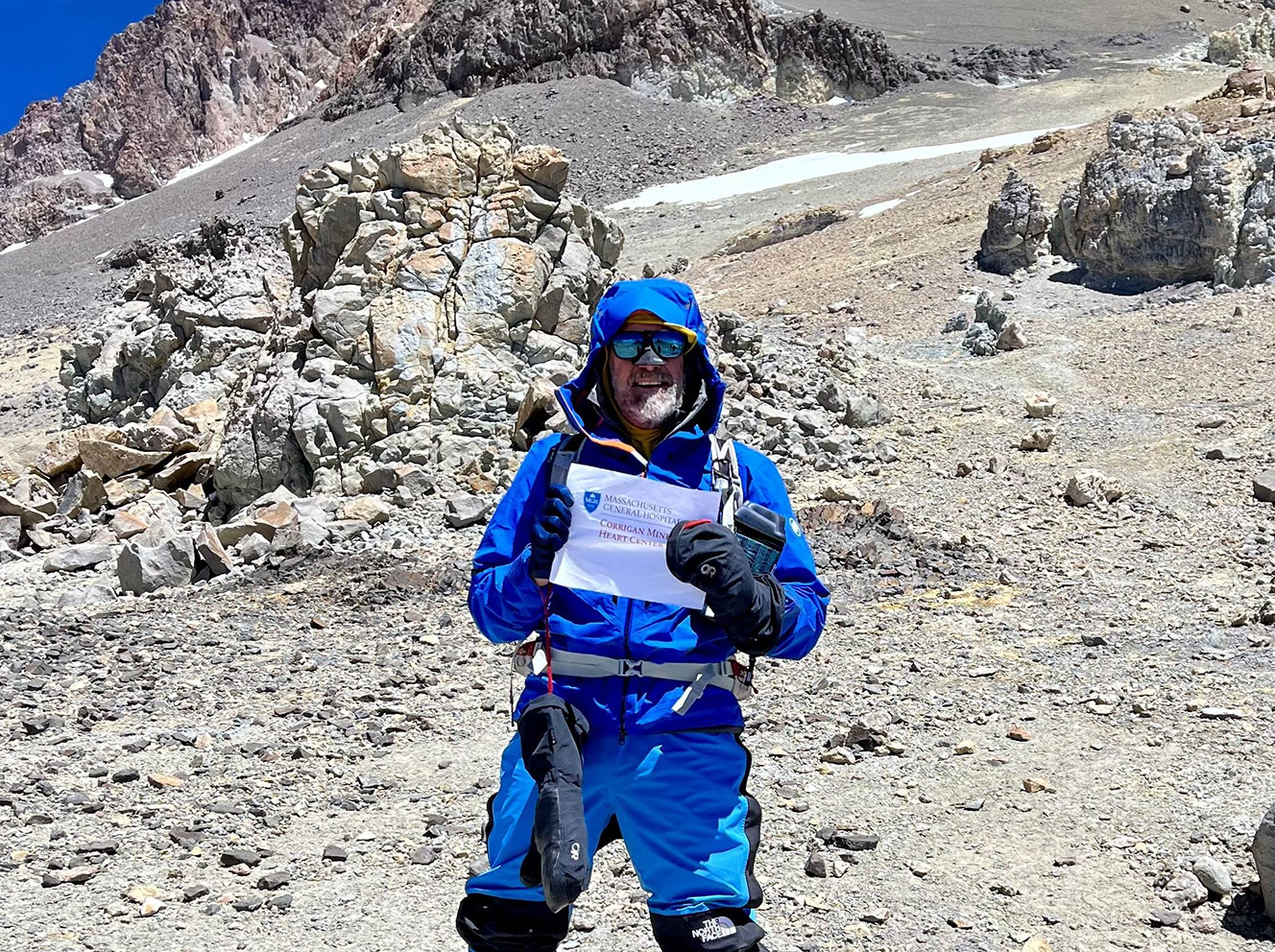

Patient Story

The Heart of an Athlete

Innovation Story

Loneliness: An Unmet Need for Social Connection

Innovation Story